Problems like this have been recently formalized in the ICDAR DeTEXT Text Extraction From Biomedical Literature Figures challenge.

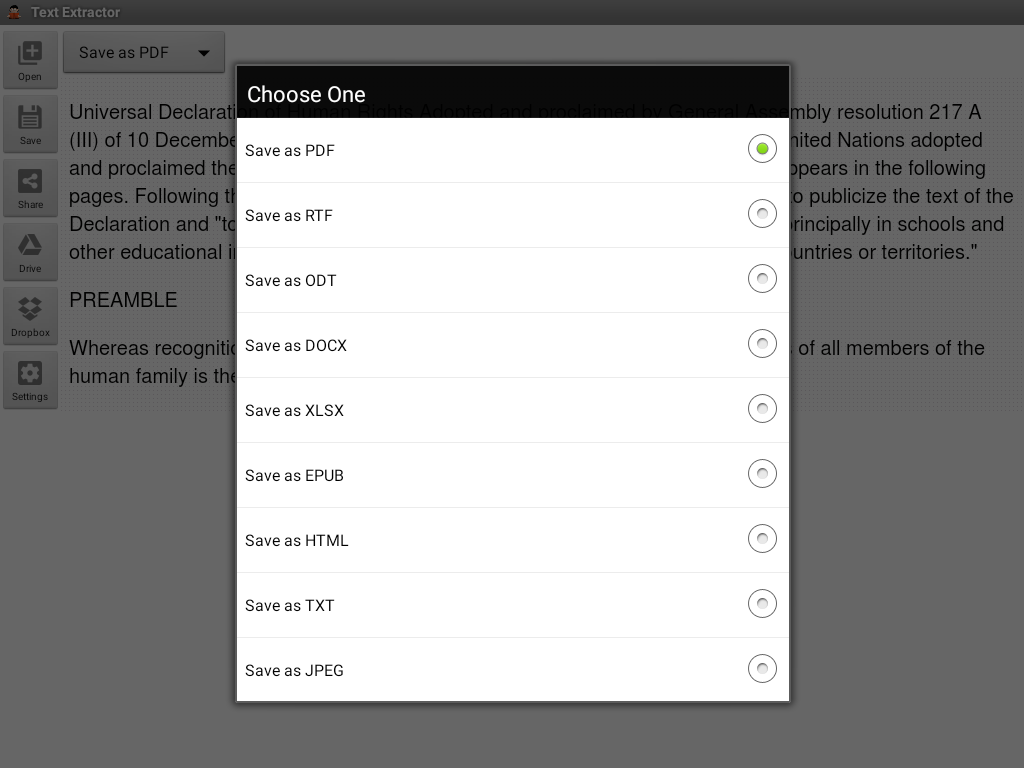

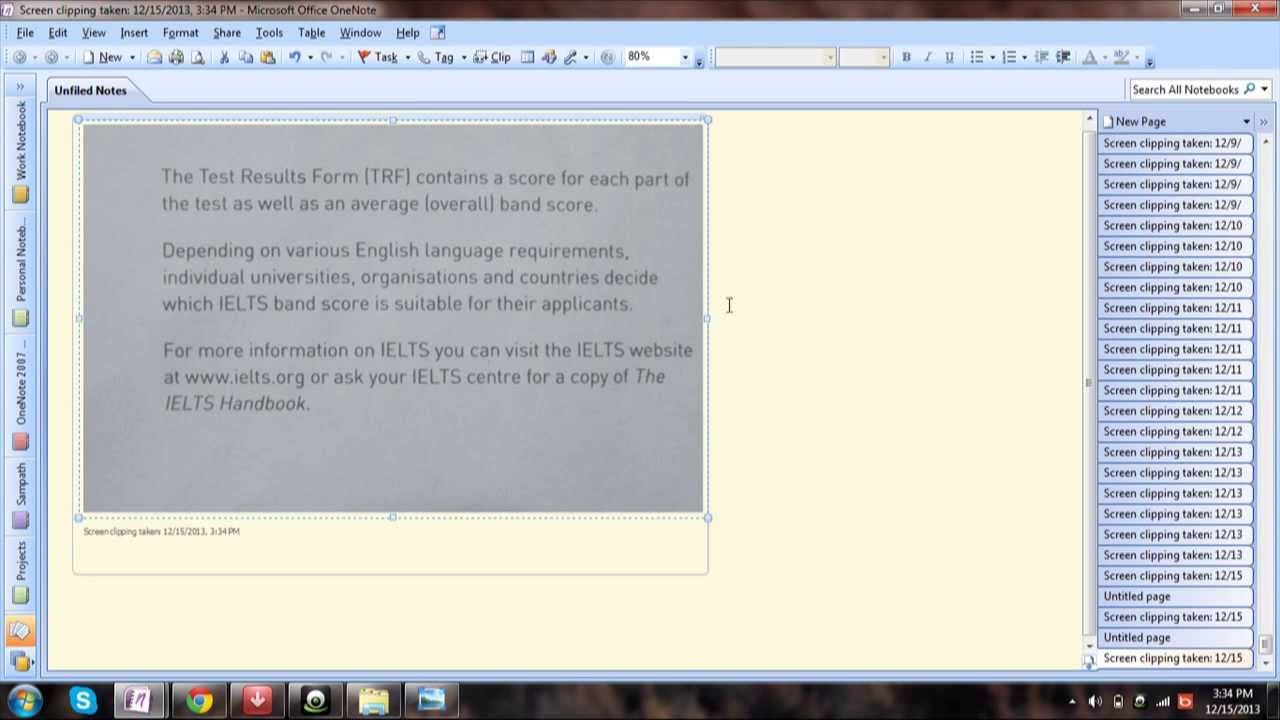

In contrast to documents with a global layout (such as a letter, a page from a book, a column from a newspaper), many types of documents are relatively unstructured in their layout and have text elements scattered throughout (such as receipts, forms, and invoices). Another area that poses similar challenges is in text extraction from images of complex documents. Problems of this nature are formalized in the COCO-Text challenge, where the goal is to extract text that might be included in road signs, house numbers, advertisements, and so on. An example might be in detecting arbitrary text from images of natural scenes. There are, however, many use cases in what we might call non-traditional OCR where these existing generic solutions are not quite the right fit. It is an exciting time in the field, as computer vision techniques are becoming widely available to empower many use cases. These include GoogleVision, AWS Textract, Azure OCR, and Dropbox, among others. More recently, cloud service providers are rolling out text detection capabilities alongside their various computer vision offerings. Tesseract provides an easy-to-use interface as well as an accompanying Python client library, and tends to be a go-to tool for OCR-related projects. A popular open source tool for OCR is the Tesseract Project, which was originally developed by Hewlett-Packard but has been under the care and feeding of Google in recent years. When documents are clearly laid out and have global structure (for example, a business letter), existing tools for OCR can perform quite well. The challenge of extracting text from images of documents has traditionally been referred to as Optical Character Recognition (OCR) and has been the focus of much research. In this post, we’ll describe a multi-task convolutional neural network that we developed in order to efficiently and accurately extract text from images of documents. 
Examples might include receipts, invoices, forms, statements, contracts, and many more pieces of unstructured data, and it’s important to be able to quickly understand the information embedded within unstructured data such as these.įortunately, recent advances in computer vision allow us to make great strides in easing the burden of document analysis and understanding. Subscriptions may be managed by the user and auto-renewal may be turned off by going to the user’s Account.Like many companies, not least financial institutions, Capital One has thousands of documents to process, analyze, and transform in order to carry out day-to-day operations. Subscription automatically renews unless auto-renew is turned off at least 24-hours before the end of the current periodĪccount will be charged for renewal within 24-hours prior to the end of the current period, and identify the cost of the renewal Try to make the picture fill the shooting screen.Sufficient light, avoid shaking, take pictures clearly.To ensure accurate identification, when taking pictures: To ensure accurate identification, when taking pictures:.Share files for commenting or viewing in WhatsApp, iMessage, Microsoft Teams.
